The Reliability Crisis: The Illusion of Competence

In the deployment of Large Language Models for high-stakes reasoning, a critical failure mode persists: the "hallucination of competence." Standard interpretability techniques rely on an axiomatic assumption: if a truth-value can be linearly decoded from a hidden state (Decodability), the model "knows" this truth and is utilizing it (Causality).

This specification invalidates that axiom.

Based on a rigorous analysis of Vision Transformers (ViTs) trained to count objects, we demonstrate that Decodability is not equivalent to Causality. We identify two distinct regimes of failure that standard auditing misses:

- The "Phantom Readout" Regime (High Decodability, Low Causality): In the final layers, tokens often carry information from which the answer can be decoded with high accuracy, yet that information is functionally inert: patching it barely changes the output. The model has "crystallized" the answer into memory but has ceased reasoning.

- The "Dark Computation" Regime (Low Decodability, High Causality): In the middle layers, tokens exert strong causal influence (patching them flips the model's output), yet linear probes fail to extract meaningful information from them. The computation is "kinetic": it is actively moving through the attention mechanism but is not yet readable.

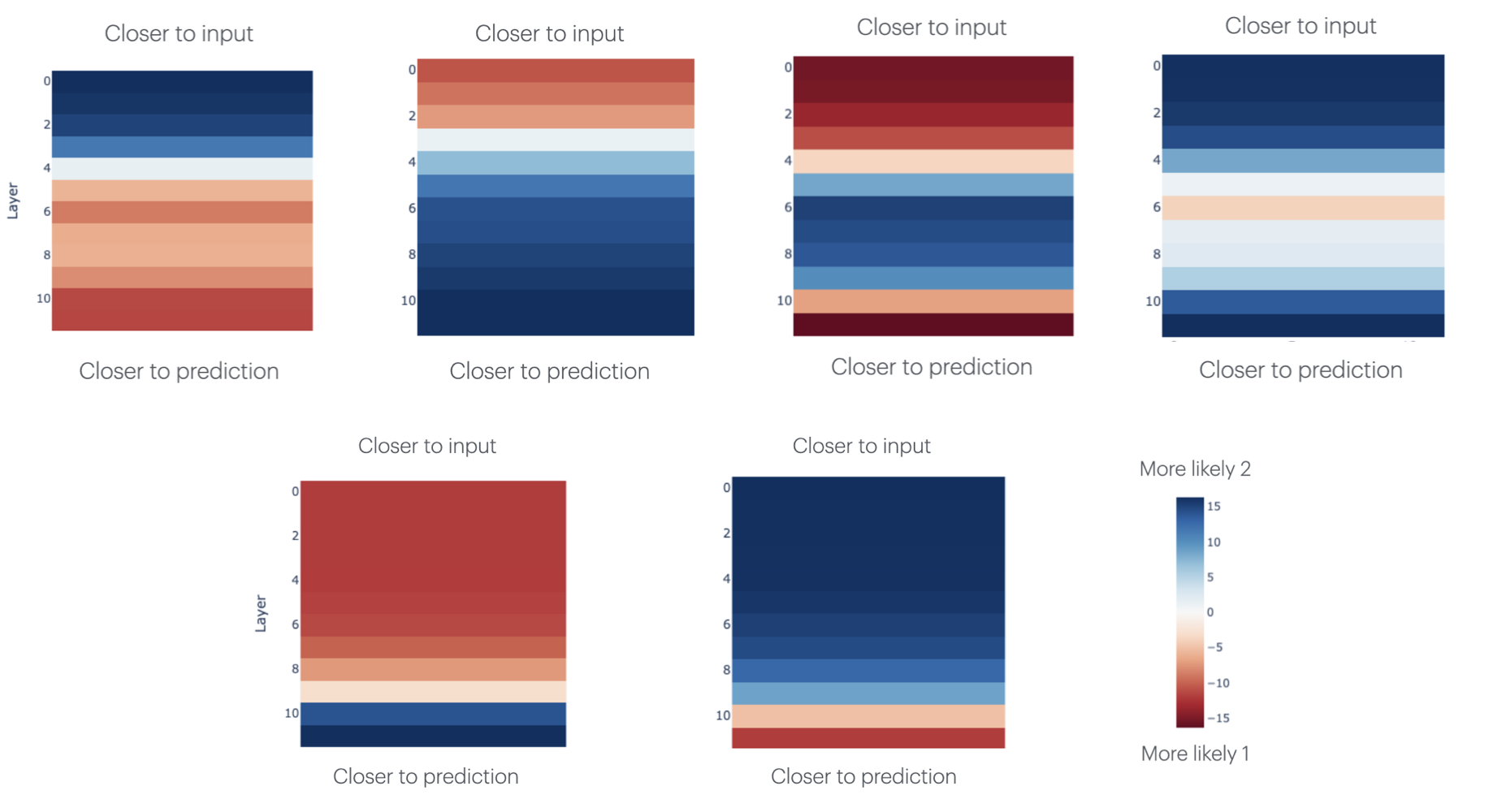

Figure 1: The Causal Intervention Operator. Transplants between "Clean" and "Corrupted" runs reveal that information flow is often orthogonal to representation.
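The transplant idea behind the Causal Intervention Operator can be sketched as activation patching on a toy model. This is a minimal, dependency-free sketch under stated assumptions: the two-layer "counting" model, the names `run`, `causal_intervention`, `local` and `mix`, and the convention that the answer is read from token 0 are all hypothetical illustrations, not the MS-CRE implementation.

```python
from typing import Callable, Dict, List, Optional

Vector = List[float]
Layer = Callable[[List[Vector]], List[Vector]]


def run(layers: List[Layer], tokens: List[Vector],
        patch: Optional[Dict[int, Dict[int, Vector]]] = None,
        cache: Optional[Dict[int, List[Vector]]] = None) -> List[Vector]:
    """Forward pass; patch[layer][token] overwrites that token's state after that layer."""
    h = [list(t) for t in tokens]
    for i, layer in enumerate(layers):
        h = layer(h)
        for tok, vec in (patch or {}).get(i, {}).items():
            h[tok] = list(vec)  # transplant the donor activation
        if cache is not None:
            cache[i] = [list(t) for t in h]  # record post-layer states
    return h


def causal_intervention(layers: List[Layer], clean: List[Vector], corrupted: List[Vector],
                        layer_idx: int, tok_idx: int) -> List[Vector]:
    """Copy one token state from the clean run into the corrupted run and finish the pass."""
    clean_cache: Dict[int, List[Vector]] = {}
    run(layers, clean, cache=clean_cache)
    donor = clean_cache[layer_idx][tok_idx]
    return run(layers, corrupted, patch={layer_idx: {tok_idx: donor}})


# Hypothetical toy "counting" model: one feature per token (1.0 = object present).
def local(h: List[Vector]) -> List[Vector]:
    return [list(t) for t in h]  # per-token detector (identity in this toy)


def mix(h: List[Vector]) -> List[Vector]:
    total = sum(t[0] for t in h)  # uniform "attention" writes the count into every token
    return [[total] for _ in h]


layers: List[Layer] = [local, mix]
clean = [[1.0], [1.0]]      # two objects
corrupted = [[1.0], [0.0]]  # one object
print(run(layers, corrupted)[0][0])                               # 1.0
print(causal_intervention(layers, clean, corrupted, 0, 1)[0][0])  # 2.0
print(causal_intervention(layers, clean, corrupted, 1, 1)[0][0])  # 1.0
```

Reading the answer from token 0, transplanting token 1 before the mixing layer flips the count from 1 to 2, while the same transplant after mixing changes nothing, because the readout token already holds its own copy of the count. Where a token sits relative to the information flow, not merely what it contains, decides its causal weight.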

The Metanthropic Thesis: Kinetic vs. Potential

To resolve this paradox, the Metanthropic Self-Correcting Reasoning Engine (MS-CRE) abandons the unitary view of "knowledge" in favor of a thermodynamic framework:

1. Kinetic Information (Data in Transit)

- Definition: Characterized by its ability to do work on the output (High Causal Efficacy).

- Role: Active reasoning and decision branching.

- Signature: High Patching Impact, Low Probing Accuracy.

2. Potential Information (Data in Storage)

- Definition: Characterized by its ease of retrieval (High Decodability).

- Role: Memory and context storage ("The Residue of Thought").

- Signature: Low Patching Impact, High Probing Accuracy.
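The two signatures can be turned into a simple classifier. The sketch below assumes that Patching Impact is measured as the fraction of the clean-versus-corrupted logit gap that a patch restores (one common choice in the activation-patching literature; the source does not state its metric), and that probe accuracy lies in [0, 1]. The thresholds, function names and logit values are hypothetical.

```python
from enum import Enum
from typing import Dict


def logit_diff(logits: Dict[str, float], target: str, foil: str) -> float:
    """Margin of the target answer over a competing answer."""
    return logits[target] - logits[foil]


def patching_impact(clean: Dict[str, float], corrupted: Dict[str, float],
                    patched: Dict[str, float], target: str, foil: str) -> float:
    """Fraction of the clean-vs-corrupted logit gap restored by the patch (0 = none, 1 = full)."""
    gap = logit_diff(clean, target, foil) - logit_diff(corrupted, target, foil)
    if gap == 0:
        raise ValueError("clean and corrupted runs are indistinguishable")
    return (logit_diff(patched, target, foil) - logit_diff(corrupted, target, foil)) / gap


class Regime(Enum):
    KINETIC = "kinetic"      # high impact, low probe accuracy ("Dark Computation")
    POTENTIAL = "potential"  # low impact, high probe accuracy ("Phantom Readout")
    BOTH = "both"
    NEITHER = "neither"


def classify(impact: float, probe_accuracy: float,
             impact_threshold: float = 0.5, probe_threshold: float = 0.8) -> Regime:
    """Map a (patching impact, probe accuracy) pair to a regime; thresholds are assumptions."""
    high_k = impact >= impact_threshold
    high_p = probe_accuracy >= probe_threshold
    if high_k and high_p:
        return Regime.BOTH
    if high_k:
        return Regime.KINETIC
    if high_p:
        return Regime.POTENTIAL
    return Regime.NEITHER


# Hypothetical logits for the answers "1" and "2" (illustrative, not measured).
clean = {"1": 0.0, "2": 4.0}
corrupted = {"1": 4.0, "2": 0.0}
patched = {"1": 1.0, "2": 3.0}
impact = patching_impact(clean, corrupted, patched, target="2", foil="1")
print(impact, classify(impact, probe_accuracy=0.55))  # 0.75 Regime.KINETIC
```

In practice the probe threshold should sit well above the chance rate of the probing task, otherwise a near-chance probe would be scored as "High Potential".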

Experimental Validation: The "Holographic Transfer"

We deployed the Causal Intervention Operator across 12 layers of a Vision Transformer. The results exposed a phenomenon we term Holographic State Transfer.

In the middle layers (6-9), spatial tokens cease to be local feature detectors and become Kinetic Bus Nodes. Patching a single object token from a 2-object image into a 1-object image forces the model to predict "2", even though the second object is physically missing.

Crucially, standard linear probes fail to decode this "count=2" information from the token (Low Potential), yet its causal impact is maximal (High Kinetic). This indicates that the reasoning is carried by the information flowing through the attention mechanism, not merely by what is statically readable from the embeddings.
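One simple way Low Decodability and High Causality can coexist is when a fact is stored non-linearly. The toy below is our own illustration, not a reproduction of the ViT experiment: a hidden state holds two binary features and the "fact" is their XOR. A brute-force search over a small grid of linear probes (grid values are arbitrary assumptions) never exceeds 75% accuracy, while a two-unit ReLU layer downstream recovers the fact exactly.

```python
from itertools import product

# Hidden states: two binary features; the "fact" is their XOR.
states = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [a ^ b for a, b in states]


def linear_probe_accuracy(w1: float, w2: float, b: float) -> float:
    """Accuracy of the threshold probe 1[w1*x1 + w2*x2 + b > 0]."""
    preds = [int(w1 * x1 + w2 * x2 + b > 0) for x1, x2 in states]
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


grid = [-2, -1, 0, 1, 2]
biases = [-2.5, -1.5, -0.5, 0.5, 1.5]
best_probe = max(linear_probe_accuracy(w1, w2, b)
                 for w1, w2, b in product(grid, grid, biases))


def relu(x: float) -> float:
    return max(0.0, x)


def downstream(x1: int, x2: int) -> float:
    """A two-unit ReLU layer that computes XOR exactly."""
    return relu(x1 + x2) - 2 * relu(x1 + x2 - 1)


nonlinear_acc = sum(int(downstream(x1, x2)) == y
                    for (x1, x2), y in zip(states, labels)) / len(labels)
print(best_probe, nonlinear_acc)  # 0.75 1.0
```

Because XOR is not linearly separable, the 75% ceiling holds for every linear probe, not just this grid; the downstream layer can nonetheless act on the fact with full reliability.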

Figure 2: The Kinetic Signature. Note the profound causal influence of spatial tokens in the middle layers (6-9), contrasted with the "CLS Collapse" in the final layers.

Module Specification: The KP-IDP Controller

The Kinetic-Potential Information Disentanglement Protocol (KP-IDP) is implemented as a lightweight auxiliary controller fused into the Transformer's residual stream. It operates as a "Dual-State Monitor."

The Phase-Shift Gate

The controller monitors the Phase Transition of information from the Kinetic Regime (Layers 6-9) to the Potential Regime (Layers 10-12).

- CASE A (Valid): High Kinetic (Mid) → High Potential (Final).

- Action: Pass.

- CASE B (Hallucination): Low Kinetic (Mid) → High Potential (Final).

- Diagnosis: The answer appeared without computation.

- Action: Reject & Resample.

- CASE C (Confusion): High Kinetic (Mid) → Low Potential (Final).

- Diagnosis: The model "thought" hard but reached no conclusion.

- Action: Inject "Chain of Thought" token.
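The gate logic above can be sketched as a small decision function. This assumes per-layer kinetic scores (e.g. patching impact) and potential scores (e.g. probe accuracy) are already available, keyed by 1-indexed layer, and that band scores are plain means; the thresholds, action strings and function name are hypothetical. The specification does not cover the Low Kinetic, Low Potential case, so the sketch reports it as unspecified rather than guessing an action.

```python
from statistics import mean
from typing import Dict

MID_LAYERS = range(6, 10)     # Kinetic regime: layers 6-9
FINAL_LAYERS = range(10, 13)  # Potential regime: layers 10-12


def phase_shift_gate(kinetic: Dict[int, float], potential: Dict[int, float],
                     k_thresh: float = 0.5, p_thresh: float = 0.8) -> str:
    """Return the gate action for one forward pass (hypothetical thresholds)."""
    k_mid = mean(kinetic[layer] for layer in MID_LAYERS)
    p_final = mean(potential[layer] for layer in FINAL_LAYERS)
    high_k, high_p = k_mid >= k_thresh, p_final >= p_thresh
    if high_k and high_p:
        return "pass"                     # CASE A: valid
    if high_p:
        return "reject_and_resample"      # CASE B: answer appeared without computation
    if high_k:
        return "inject_chain_of_thought"  # CASE C: computation reached no conclusion
    return "unspecified"                  # low/low is not covered by the specification


layers = range(1, 13)
print(phase_shift_gate({l: 0.1 for l in layers}, {l: 0.95 for l in layers}))
# reject_and_resample
```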

Conclusion

KP-IDP redefines hallucination not as a factual error, but as a Thermodynamic Violation (Potential Energy > Kinetic Work). This allows us to detect ungrounded outputs without needing an external source of truth, simply by verifying the physics of the model's own thought process.